Our Lives with AI in 2040: Part I

Shit's going to get real. Real weird. And not always for the better.

This is installment 4 of chemo-steroid inspired writing. Last time we followed the rabbit hole into the 1930s with British fighter design and the ecosystem and conditions which created modern Formula 1. As a logical follow-up, this week we’re going to ponder our lives with artificial intelligence (AI) in 2040. This exploration will be in three pieces over the next few weeks.

Part I: What 2040 might look like after the AI Singularity.

Part II: What trends and current developments suggest that 2040 will look like the six cuts in Part I?

Part III: How might we prepare our kids (and ourselves) for such a world? What screens are good for kids? What games are good for kids? How do we stay on the right side of these trends, and not become victims of AI and the future digital world?

In more colloquial, urgent terms:

Part I: Artificial Intelligence (AI) is coming. Shit is going to get real. Real. Weird. And not always for the better.

Part II: People, companies and governments will amass more processing power to get more control. They don’t always have our best interests at heart, if ever. Here are some stepping stone clues to why 2040 might get real weird. I know this sounds like libertarian fear-mongering, but let’s look at some recent examples.

Part III: We still want our kids to be alive and well in 2040, and not addicted victims of Meta and Instagram. Going cold turkey and being a Luddite is not the answer. We need to guide them (and ourselves) now so they don’t have to figure this out on their own with their friends in a basement with booze and other substances.

PART I:

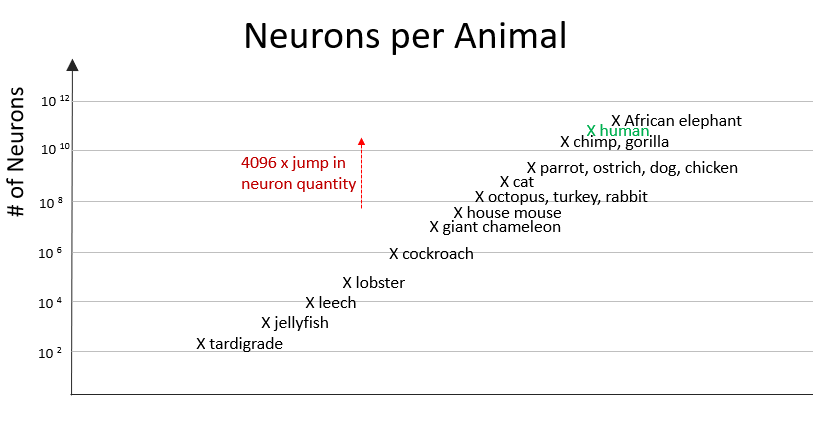

Let’s take six cuts of what life might look like in 2040. Why 2040, you might ask? Because that's when the singularity is predicted to happen. What is the singularity you speak of? In short, it is the point at which humans will have created artificial intelligence capable enough to surpass us. Science fiction writers and technologists have been talking about this since the 1970s. Most of them predict that it will happen sometime between 2040 and 2060. No one is sure what will happen when it comes. The exact year is less important. I believe it will happen when our kids are adults and most of us are still alive.

Some people think that humans will evolve to the next level, aided by technology. Others think that AI will evolve and kill us in our sleep before we even know it. Others think it is a nothing burger hoax, and that we will soon reach physical and material design limits in processor development before supercomputers can become powerful enough to run AI. The truth is somewhere in the middle. I expect it will take most of us by surprise, and it will make a small set of humans extraordinarily powerful.

For now let's think of the singularity as computers that are a lot more powerful. If Moore's Law continues for the next 18 years, with computers doubling in processing power every 1.5 years (as they did from 1961 to 2011), that is 12 doublings, and we're looking at computers that are 2^12 = 4096x more powerful in 2040 than today. The human brain has 8.6 x 10^10 neurons. If we were to have a conversation with an animal with 4000x fewer neurons, we’d be talking to a mouse. Probably with a broom in hand.
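For anyone who wants to check the napkin math, here is a minimal sketch. It assumes only the numbers already quoted above (18 years, one doubling every 1.5 years, 8.6 x 10^10 neurons); the function name `moores_law_factor` is made up for illustration, and steady doubling is of course the big "if".

```python
# Back-of-the-envelope check of the Moore's Law arithmetic above.
# Assumptions (taken from the text): 18 years, one doubling every
# 1.5 years, and roughly 8.6 x 10^10 neurons in a human brain.

def moores_law_factor(years: float, doubling_period_years: float = 1.5) -> float:
    """Return the growth multiple after `years` of steady doubling."""
    doublings = years / doubling_period_years
    return 2 ** doublings

factor = moores_law_factor(18)                # 18 / 1.5 = 12 doublings
human_neurons = 8.6e10
scaled_down_neurons = human_neurons / factor  # a brain with ~4000x fewer neurons

print(f"doublings: {18 / 1.5:.0f}")
print(f"growth factor: {factor:.0f}x")
print(f"neurons after dividing by that factor: {scaled_down_neurons:.2e}")
```

Twelve doublings give the 4096x figure, and dividing the human neuron count by it leaves a brain in the tens of millions of neurons, which is the ballpark the broom comparison relies on.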

It is neither here nor there, but mice (and rats) are incredibly intelligent and adaptable as a species, despite having three orders of magnitude fewer neurons. They’ve been able to evolve parasitically with humans and take advantage of our technical and industrial progress. As humans shifted from hunter gatherers to subsistence farmers, mice realized it was easier to live by agricultural fields and grain stores. As humans settled in cities, mice found even higher densities of food storage and waste to live off of. As humans explored across the oceans, European mice hitchhiked on these ships. As a colony, and species, mice are resilient, social, resourceful, and quick to adapt behavior and physiology.

For humans, mice and rats are pests. They spread disease, raid our food supplies, chew on electrical wiring, and infect us when they bite. And they make us scream like kids and jump on the counter. They do have two positives for humans. First, humans use them extensively for animal testing because their genetics closely resemble ours. Their organ response is similar to ours. They can be quickly and cheaply bred (and inbred) in controlled populations and large numbers. 95% of all animal trials done by humans are on mice and rats. Because mice are generally considered pests and more problematic than cute, we do not feel too guilty about testing on them, and subsequently euthanizing and dissecting them to learn more about science. We use them to perfect products that make us more comfortable. Mice and rats are as integral to the biotech and genomic revolution underway as computational power, machine learning, and AI. Mice are more likely than humans to adapt and thrive in the coming AI revolution. Also, there is a low probability that they will become addicted to tweeting garbage or posting obnoxious bullshit on LinkedIn.

The second positive for humans is that mice eat cockroaches. Any creature that eats cockroaches adds value.

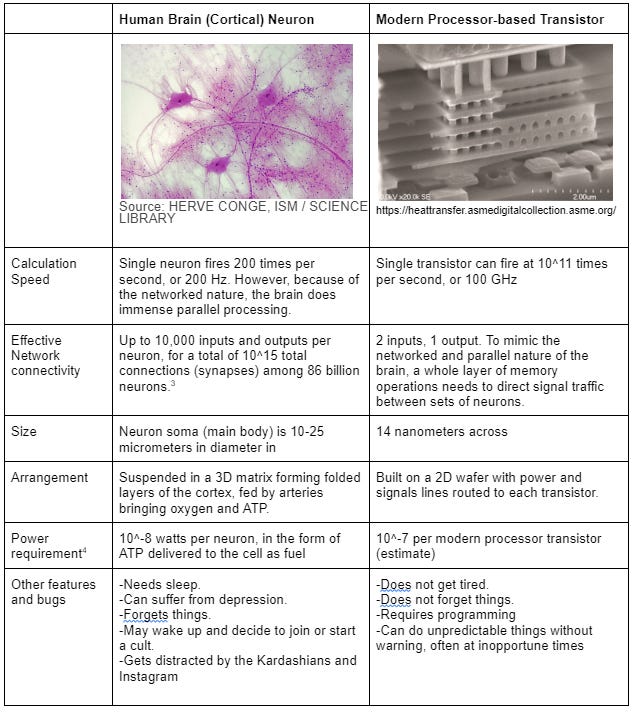

The number of neurons in the human brain and the number of transistors on a processor are not directly equivalent. The biological neuron, with up to hundreds of inputs and outputs, is a far more capable signal processor. The transistor, the building block of the modern computer processor, has two inputs and one output. It requires a maze of layered signal traces to mimic the networked structure of the human brain. The human brain has almost 10^11 neurons in a 3D suspension, fully networked, routing signals through a 3D matrix. For comparison, Apple’s M1 Ultra chip today has 114 billion transistors in an area the size of two quarters.

Neurons vs. computers is a deep rabbit hole. In Part II, we’ll look at the key hardware technologies which might enable Moore’s Law to continue. These technologies include neurotransistors, quantum computing, and the race for supercomputers. Reaching the singularity will require 10-20 more Moore’s Law cycles, resulting in significantly more processing power. This processing power will drive algorithm improvements in machine learning, neural networks, and deep learning, which will eventually lead to the singularity. It’s worth reviewing the three levels of AI:

Artificial narrow intelligence (ANI), which has a narrow range of abilities;

Artificial general intelligence (AGI), which is on par with human capabilities;

Artificial superintelligence (ASI), which is more capable than a human.

In 2022, we’ve achieved ANI in many areas: chess, self-driving cars, UAV and drone operations, high frequency stock trading, and a variety of knowledge worker tasks from radiology to sales forecasting. We have not achieved AGI, and will not until we are close to the singularity. I expect it will be difficult for us to recognize an intelligence more capable than ourselves, partly due to arrogance and denial, and partly due to the ant-on-the-staircase problem. Imagine you are an ant crawling up the grand staircase on a cruise ship. For you, life is either vertical or horizontal, a path marked by pheromones, and something is either a transportable food crumb or it is not. If a swanky cruise ship guest tried to explain to you that you’re on level 3 of 10, and that the ship will arrive in Turks and Caicos tomorrow, and the casino will be closing early, you would not know what to do with that contextual information. Crumb. Pheromones. Vertical. Horizontal.

Let's leave the singularity here and take six cuts at what life will look like when the singularity does come. And it will, assuming we don’t nuke ourselves or boil our home first.

CUT 1: The year is 2040. We start in a 7th grade science class at your local public school.

Teacher [human]: Alright class, it’s time to put on your VR headsets. Go through the blue door this time and when you are ready, climb into the micro submarine with your pod mate. We’ll be going into a normal human skin tissue cell. I’d like you to find these structures you see on the heads up display… please spend some time watching them and figure out what they do, and make sure you actually go into the nucleus…

The teacher watches on a screen as 10 groups of 2 students flash green, showing they’ve gotten in their virtual pods. On her command screen, the pods shrink virtually and move into the cell. The kids, steering the virtual submarine pods, are poking around in full 3D as they explore cell structures.

Later on that day, they are a fly on the wall in Galileo’s study in Florence. He’s grinding glass lenses by hand, polishing them with a cloth. He’s having a discussion with his disciples. Duke Cosimo II de Medici comes around, asking what Galileo plans to observe tonight. The teacher fast forwards to that evening. Galileo has his eye up to his telescope, describing what he sees. He invites you the student to have a look. He then explains what he thinks is happening about the orbit of moons around Jupiter, which in turn orbits around the Sun.

Later that day in English class: “Your homework tonight is to visit Martin Luther King Jr. in his Birmingham jail cell and ask him about the letter he is writing. Tomorrow, each of you will write an essay about what you learned from Dr. King. Should we tear it all down from the outside, or should we fix what we can from the inside? Ask him yourself.”

CUT 1 Commentary:

The idea of a Star Trek style holodeck or a Matrix-style world on demand is not new, nor is meeting historical characters. It will be a VR headset and a voice command away. Instead of reading about something, you can see it first hand. Wordsworth can read his poems to you while you stand in a field of daffodils together. The Magic School Bus idea above is not too far-fetched. We should accept it as a far more compelling way to learn ideas than from words and diagrams on a 2D page, at a reading speed that tops out around 200 words per minute for a 7th grader. Education will be personalized in pedagogy. AI will quickly assess how you learn from the time you are a toddler and feed you information in different ways until it figures out the fastest way to fatten your brain up. You might even choose to have a brain implant on your cortical surface to measure how much brain activity is registered with each stimulus. This will help the AI figure out how to stimulate which parts of your brain and confirm that external knowledge is getting through to you.

Let's admit that today's education system is suboptimal at best. A highly trained, conscientious, well-meaning human stands in front of 20 students. This teacher has a list of concepts and neural connections to make in as many of these students as possible in a set amount of time. Hopefully, this teacher is trained in the standard best pedagogy to teach a concept. Let's say we're teaching fractions today in math class. Our teacher starts by going through standard examples and drawing pie charts of 1/2… 1/3… 1/4. She knows the students that are getting it. She sees the middle third that will soon get it with more examples. She sees the third that are struggling, or zoned out. She comes at fractions a different way with jelly beans. She then tries a different way by cutting rope. After each of these new methods she's taking a mental accounting of how many students have now made the right neural connections. Time is ticking away and she needs to make a judgment call of how many more students require how many more attempts for it to stick. Time runs out. Students are hungry and unpack their lunches. For some fraction of the class, fractions are out of reach for today. It’s the same fraction that’s been struggling with other math concepts… for years.

Imagine a different scenario where in the same 40 minute block each student has put on a VR headset. Their math tutor, an avatar of their choosing, is asking them questions about slicing the cherry pie in front of them in half. The students need to grab a virtual katana and slice this virtual pie in half. Their tutor (possibly Harry Potter or their favorite Minecraft YouTuber) is challenging them with more difficult concepts in fractions. Do it correctly and Harry or Hermione casts a spell for them, or Preston gives them a Minecraft tip. Some kids are crushing it. They're slicing 12 pies into 7 parts. Other kids are still slicing 2 pies into 4 parts. The AI tutor figures out where each kid’s foundation for learning fractions is weak and shores it up before moving to the next level. After 40 minutes, every child has advanced 40 minutes' worth in fractions. No child feels frustrated. The teacher downloads a report from all the AI tutors, and makes a note of which students should focus on fractions during their free learning block at the end of the day.

These adaptive learning programs exist today. They work well for math, where skills build on one another like the layers of a pyramid. While this method of learning might seem anti-social, we should acknowledge that when it comes to learning hard skills, an adaptive learning program, personalized to our learning style, is more effective than waiting for a single human to deliver the pedagogy that best speaks to us. Kids will still need to go to school to socialize, learn to trust their peers, put up with bullies, appreciate diversity, develop their confidence, and check their ego. AI will never replace a good game of freeze tag, off the wall, or capture the flag. AI will not teach our kids empathy and patience, or the nuances of lunch table politics.

Spoiler Alert for Part II: We’ll explore the latest in ed-tech, and the latest in virtual reality technology and virtual worlds. In Part III, we’ll look at sandbox computer games that can teach complex things like spaceflight dynamics in digestible and fun ways for anyone interested. These games are the future of hands-on, minds-on learning.

CUT 2: You are a middle-management supply chain knowledge worker in a Fortune 500 company. With advancements in enterprise systems functional AI, 40% of your knowledge workforce has been laid off in the past 4 years.

Priscila [AI]: Becky, you might want to lean in on this one. Last week you presented data on why production plan scenario 2A was a good idea and everyone agreed. It’s unclear why the sales team are backing off now. I’ll do a quick check of the actual sales numbers for quarter to date.

Becky [human]: Understood, Priscila [AI]. Something may have thrown their model off. Please check with the factory AI in Europe to see if they have raw material to shift over to forecast scenario 2C. Let’s figure out how much revenue we can still make in this quarter.

Priscila [AI]: Marguerite [AI] from EU ops says that they have enough inventory on hand right now to run 70% of forecast 2C, and with the purchase orders on order, can cover 100% of the forecast by the end of the quarter. Should I run the numbers and create this slide?

Becky [human]: Yes please. Let’s bring it up later if the sales team doesn’t bring it up first.

Sales team [human]: Becky, you might be wondering why we've changed our position from last week about the forecast. We were surprised to see this from our sales AI, Ben. Ben [AI] was sure last week that all the data confirmed the demand plan that we presented, which led us to production plan 2A. This week we're starting to see wrinkles in orders and getting strange signals and order cancellations from our customers. We suspect our competitors are either sitting on high inventory and cutting prices, or are starting a longer game to win back market share. We've directed 75% of Ben's [AI] time, and 25% of our marketing intelligence AI Laurent's [AI] time, to see if they can figure out what is going on. We'll know more in 3 days' time. We agree with shifting to forecast scenario 2C as our best chance to save the quarter. Priscila [AI] calculates that we can hit our top line revenue target with 90% confidence. Can you have Priscila [AI] calculate our bottom line margin hit, assuming we switch to 2C this Friday?

Becky [human]: Yes. Thank you for the confirmation. We’ll make preparations now to switch to 2C. We expect little or no margin hit from switching to 2C, with this much lead time. Priscila [AI] will confirm. Look forward to seeing what Ben [AI] and Laurent [AI] come up with, and if we need to alter our medium term plans. Glad we caught this in time for a course correction.

CUT 2 Analysis: With AI, companies are moving faster and more aggressively. The companies that can sense changes in the market and quickly adapt their operations to exploit them will survive. The others will eventually die or be acquired for their assets, not their obsolete people or processes. If you’ve worked with the clunky enterprise data systems of today (SAP, Oracle, Salesforce, FIS…), or tried to implement and debug one of these systems, you might think that AI will take 100 years to become smart enough to actually add value. Today’s systems are transaction trackers, with some algorithms built in for forecasting, planning optimization, and simulation. They require a good amount of contextual knowledge and experience to know what data you need to make a decision, and then how to find it in the system. The interfaces are not intuitive, and legions of implementation consultancies are waiting to charge you money to fine-tune your workflow.

Because of our reliance on these legacy systems (SAP, Oracle), and the high costs and effort of switching, many big companies are stuck with these dinosaur systems. Smaller startups and mid-size companies will be more accepting of newer software suites which can seamlessly integrate more data across functions, and provide more integrative analysis for users. These companies will rely on AI to keep their data clean, and learn to trust the recommendations of their machine learning algorithms. They’ll spend less time on the transaction and data hygiene, and more time on substantive analysis and acting on timely intelligence. They’ll be leaner, faster, and more focused. The AI will train a cadre of functional leaders who understand how different functions work together to respond to changing business conditions, unlike the deep silos of large companies today. These knowledge managers will be both broad and deep, and eventually bring their skills to the functions of dinosaur companies willing to listen because they know they are struggling to survive.

Spoiler Alert for Part II: As artificial narrow intelligence (ANI) takes on more complex tasks, it will render a large number of today’s computer bound office workers obsolete. We’ll look at what advances will happen in automated knowledge work, and which job families will no longer require humans to move rock piles of data. We’ll look at the latest functional AIs in a business context, and how they might coexist with humans.

CUT 3: Changing Bedpans and Moonlighting as MMO Warlord. In 2040, many of the 71 million baby boomers (76-94 yrs old) in the US will require elderly care, either in home or at a center. That’s a lot of bedpans to change. You are a nursing home worker who has shifted into healthcare after your knowledge worker job was rendered obsolete by AI, or cut as cost savings by a smarmy McKinsey consultant AI.

“Your hair is looking beautiful today. What’s your secret? Can I get you anything else Mrs. Wendell?” You change Mrs. Wendell’s bedpan, mattress pad, and do a quick wipe. You rub Mrs. Wendell’s nether regions with Desitin to prevent swamp crack.

In your earbud, Nurse Jackie [AI] chimes in: “Ask Mrs. Wendell about her granddaughter’s piano recital. It was something she was excited about last week when she holo-chatted with her son’s family.”

Oh shit. Literally. Some of Mrs. Wendell’s poop escaped the mattress pad and soiled the sheets. Nurse Jackie [AI]’s video sensor saw it too, so you know you have to change it, or Nurse Jackie [AI] will have some words with your shift supervisor. You help Mrs. Wendell settle into her recliner and begin changing the sheets. 3rd time today. This gets old. While you are changing out the pillow cases, your Napoleon Dragonfire Gamemaster AI chimes in your earbud, letting you know the day’s latest developments in your MMORPG world. [Boomer translation: MMORPG is Massively Multiplayer Online Role-Playing Game] In this virtual game world of Napoleon Dragonfire, the year is 1807, and the Prussian Army has increased their mercenary forces and now has 63 dragons, of which 28 can fly with incendiary weapons. Your Spanish allies are concentrating on building heavy infantry with rock troll units. The English have researched steampunk reconnaissance balloons that can soar over the battlefield. You get messages from your friends suggesting battle plans and requesting orders. In the virtual realm of Napoleon Dragonfire, you are a Major General of the 3rd Coalition on third shift. Hundreds of people you’ve never met in person respect your strategic skill and take orders from you every night between 0400-1000 GMT. They don’t know your real name, or that you are changing a shit-stained sheet right now. They do know that if you lead your team to victory, they will be able to cash in on the crypto and time they’ve staked in your leadership. You start thinking through your tactical positioning of units for the evening battle to come. Many people have put their trust in your record.

“Mrs. Wendell, would you like to get back in bed, or perhaps take a stroll outside and have some decaf tea with Mr. Bessemer?” Mrs. Wendell opts to get back in bed. After you leave, Mrs. Wendell dons her VR headset, which re-simulates the holo-chat from last week. She’s in her son’s living room, which is in the house she lived in for 50 years. Her granddaughter is at the piano, playing Eine kleine Nachtmusik. The same song her son used to play. As she takes part in this simulation, her cortical activity peaks and her brain implant array shows stronger activity in previously quiet cortical sections of neurons. This regimen of nostalgia and event reinforcement is helping hold off her onset of Alzheimer’s symptoms.

As you walk down the hallway towards the laundry, your augmented reality glasses start displaying tactical charts of the terrain that you plan to lead your regiment in tonight.

CUT 3 Commentary: I can only imagine how this came to be. Sometime in the next decade, the shortage of home health care nurses and elderly care workers will become critical. Business AI will not yet have rendered enough knowledge workers obsolete, and many of them are unable to transition their identities to bedpan changers. Fortunately, many warehouse workers from e-commerce giants were replaced by robots, and shifted to contract in-home healthcare work. Silicon Valley investors see this worker gap as a critical challenge for society. They sponsor incubators and hack-a-thons to solve this problem. Somehow, changing bedpans and wiping bums becomes seen as the hardest of tasks, and lo-and-behold, the “poop-tech” industry is born. If you can design a robot to gingerly wipe great auntie Kelly’s bum, then you can design a robot to do all easier care tasks. In the 2030s, hardware startups led by Gen-Z founders get funding, and all sorts of robot prototypes are changing diapers and bedpans. There’s only one problem. While most 38 year old Gen-Z startup founders would prefer to have their asses wiped by a robot rather than another human, most baby boomers don’t trust the latest robo-shit-wiper that rolls into their room and asks if they’ve done a number two with a creepy and sensuous digital voice. They still prefer a real human, who might ask them how they are, and might even care how they are.

Elderly care centers able to afford human staff become more prestigious and desirable. Poop-tech does make its way into many lower tier hospitals, and hospitals with captive audiences like military and VA hospitals. The robots offer reliability and consistent ass-cleanings. Those who can afford it choose human bedpan changers, as it’s a litmus test to prove that the place still has a human touch for your loved ones. Or so we hope.

Explanation for Boomers Who Haven’t Played MMORPGs or MMO (Massively Multiplayer Online) Games

Most Boomers are familiar with Dungeons and Dragons. Gen Z might not be. Explanation for Gen Z: Dungeons and Dragons was released as a fantasy role playing game in 1974. It contained a set of rulebooks for a game master to facilitate games with friends. Several player friends would design their characters, who would each acquire special powers according to the rule book. The game master would walk the players through a quest or mission, and the players would interact with the world, and each other, according to the rule book. This was, and is, the nerdiest of nerd bait. Some players show up dressed as dragons, dwarves, or wizards to roll their 27-sided dice. Back to MMORPGs and MMOs…

By the mid-90s, when PC gaming started to get good, fantasy role playing games went digital. By the late 90s, they were hosted online with servers, and hundreds of players could interact on the same map. The game master was now the software, governing rules of spell-casting and the damage of swinging an enchanted war hammer. Today, MMORPGs are expansive and immersive. Thousands of players can interact in a Star Trek or Star Wars universe, any number of fantasy worlds, and in niche simulations like the age of fighting sail in the late 1700s. You can join teams or run around as a lone wolf, collecting resources, increasing your abilities, joining quests, and accomplishing missions to increase your team’s standing. Teams are serious, and players invest time and money into their characters. Some MMOs are mundane and pedestrian, such as Second Life. An elderly white man can take on the persona of a young Hispanic girl, and live an entire second life in this virtual world. Creepy, but why not? It may be far more interesting than his first life. Over 2 million players are active in Second Life. In 2017, MMOs (and Multiplayer Online Battle Arena, or MOBA, games) accounted for $25B worldwide, or 25% of all gaming revenue. In 2021, MMOs and MOBAs grew to $42.2B. The MMO market is expected to grow at 10% per year in the next 5 years, with 48% of the market in Asia (China and India).
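To put that growth rate in dollars, here is a rough compounding sketch. It is an illustration, not a forecast: it assumes the $42.2B figure for 2021 is the base, that growth is a flat 10% every year, and that Asia's 48% share stays constant. The function name `project_market` is made up for this example.

```python
# Rough compound-growth projection for the MMO/MOBA figures quoted above.
# Assumptions: $42.2B in 2021 is the base, growth is a flat 10% per year
# for 5 years, and Asia holds a constant 48% share throughout.

def project_market(base_billion: float, annual_growth: float, years: int) -> list[float]:
    """Return projected market size (in $B) for year 0 through `years`."""
    return [base_billion * (1 + annual_growth) ** y for y in range(years + 1)]

projection = project_market(42.2, 0.10, 5)
asia_share = 0.48

for offset, size in enumerate(projection):
    print(f"{2021 + offset}: ${size:.1f}B total, ${size * asia_share:.1f}B in Asia")
```

Under those assumptions the market lands at roughly $68B after five years of compounding, with a little under half of that in Asia.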

Spoiler for Part II: We’ll look at the kinds of jobs that will still require the human touch after the singularity. We’ll also dive into the future of MMO worlds, made more vivid, visceral, and addictive with virtual reality. We’ll examine how the game economies might work, and how you could translate your MMO skills and winnings into real money in your first life. This is not all (generally) harmless fun and games. For every Fortnite or World of Warcraft universe, there will be some kind of demented virtual world on the dark web for troubled people to act out their fantasies as plantation owners in the pre-Civil War South, or as SS guards at Auschwitz. We will deepen the political divide if people spend more time in divergent realities and define more of their identity from their roles in them. The more compelling and immersive these games and worlds are, the more they can expose us to social engineering by others.

CUT 4: Augmented Reality and the Pipeline Welder. In the 2030s, many of the states west of the Mississippi River have suffered severe droughts affecting our national agricultural yields for grains, peas, and cattle. Global warming is the culprit, as weather patterns have shifted over the past 10 years. While our weather AIs have become much better at predicting local weather 2-3 weeks out, our models are not able to characterize the long term regional effects of global warming and climate change. We don’t have enough detailed data to learn from and train our AIs. As a stopgap measure, we’re building a national pipeline to pipe water from the Mississippi and Missouri Rivers to these states. This is thousands of miles of 72-inch diameter steel pipe, buried underground as much as possible. Meet Mark, our uber-competent jack-of-all-trades foreman who has been called in to get this project back on schedule.

Mark steps out of his truck and onto the muddy field in Nebraska. It’s a hot and humid July day. He walks by the recent joints, fingering the welds and looking at the bottom curvature where it is most difficult to lay a clean bead. Not bad. Before he walks up to meet his crew, he puts on his welding helmet and activates the heads-up display. An augmented reality overlay displays live data collected from the job site AI. While Mark has worked hundreds of pipeline jobs and studied this specific production plan on his flight out, he needs to quickly get the lay of the land. He needs to know his people, equipment conditions, and environmental factors. He needs to spot safety issues before they become a problem.

On his status readout, he understands that welding the active joint began 10 minutes late, but by watching the live video of the welder, he knows that time can be made up in the next 25 minutes. After years of walking onto sites, he knows it is best to start getting to know the least busy and least stressed teams. He’ll head over to the rigger crews operating the cranes and get the scoop for 10 minutes. From there, he’ll talk to his Weld Crew A team and quality engineer. By then, the root pass for the weld joint should be finished, and he’ll follow the quality engineer into the shield shack for inspection.

After making his introductory rounds for an hour, Mark has dictated a list of notes to his personal AI assistant. The backup 10 kW generator needs maintenance. Electrical safety and cable runs need improvement. Welding consumables (tips and rods) need to be restocked in 2 days. Safety and first aid equipment should be inventoried, and the defibrillator battery checked. Weld Crew A’s combined experience is adequate, though Rigger Crew B’s lead is relatively green. It’s early into the shift, and the team is looking chipper for their new site boss. They have their safety equipment on and it seems the previous boss didn’t cut corners. Alex Poe looks hungover, or may have a cold. Best to check on him later. The crews seem hydrated, but best to bring in two cases of Gatorade on ice for tomorrow, one for each shift. There’s always one guy who forgets to hydrate…

Mark gives himself 6 hours before sunset to earn trust with his leads, make his standards known, fix some deficiencies from his walkthrough, and set the plan for tomorrow’s work. His management wants him to increase to 12 sections per day over two shifts. With 15 hours of summer daylight and a well-oiled crew and cooperative weather, it’s possible. The best that this combined crew has done is 9 sections. 12 sections per day is totally achievable once the teams see how to mesh their trades like clockwork. Mark gives himself 2 weeks to achieve this.

CUT 4 Commentary: While someday, cross-country pipeline construction may be completely automated, it will likely still require humans in 2040. Mark is a critical human in the loop. He manages the skills and efforts of specialized workers and makes risk-managed decisions to achieve aggressive goals. Cut 4 is about how to provide streamlined data to experts like Mark to make them most effective in the real world. To do so, the AI needs to collect broad and relevant data, know what is useful to Mark, and present it to him in an elegant, non-overloaded way. 90% of the information incoming to our brains is visual. We process this information 60,000 times faster than we process text. Of the 16 billion neurons on our cortical surface, 4-6 billion neurons are dedicated to processing visual information. (Note that another 69 billion neurons are in the cerebellum, responsible for movement, balance, and coordination.) To be efficient, we need to overlay targeted and critical details to our fastest mode of information intake: this is augmented reality.

Spoiler alert: In Part II, we’ll look at the latest trends in augmented reality, and how it can make skilled experts even more capable. We’ll explore brain-machine interfaces and some breakthroughs and challenges. If humans are to keep up with AI, we need to increase our rate of information flow, or at least make it extremely efficient for the information to get through our skull and into our neurons.

CUT 5: The Chinese Central Committee Subcommittee on Grand Strategy. Just as corporations will apply AI to streamline their own operations and sense changes in the markets, governments will do so as well.

Note: this section is not meant to demonize China or portray it as aggressive. All major powers will seek advantages with AI. China is investing heavily in AI and supercomputing. Combined with a central command structure, control over companies, and a massive data collection infrastructure, this means China will probably lead the world in AI policy execution. Militaries, intelligence agencies, and diplomatic corps will also employ AI to get ahead. The following is one example of how this might play out.

General Zhu [human]: “Chairman, for the past month Sun Tzu [AI] has been running different scenarios on how we should manage our rare earth metals. The results are… unconventional.”

Chairman [human]: “Do explain, General Zhu.”

General Zhu [human]: “Sun Tzu [AI] has run the scenario 185,000 times, and determined that we will reach our inflection point for global leverage with our rare earth metal stockpiles in the next 3 years. The West is starting to ration their own stockpiles and we think they will continue to invest in new mines and refining capability, reducing their dependence on our supply. For their own quantum computing developments, we expect they’ll need a lot more niobium and ytterbium. We still hold 35% of the world’s known reserves, and 85% of the refining capacity.”

Chairman [human]: “We’ve known this day would come for a while. What does Sun Tzu [AI] propose?”

General Zhu [human]: “We assess the US and India will figure out in the next 12 months that niobium and ytterbium-coated qubits are what have allowed us to get ahead in quantum computing, and they will scoop up the rest of the global supply as a strategic material. Sun Tzu suggests a threefold strategy: First, we need to sabotage the niobium purification and refining operations in Brazil. Our state-controlled companies installed those machines in 2032, and we continue to service them. We can plant a firmware bug to let impurities through and also spoof the spectral analyzers on outgoing inspection. Second, we need to ramp up our economic influence over Vietnam, so they don’t offer their ytterbium reserves as a relief valve to the West. Third, we need to create a disinformation campaign to convince Intel, AMD and TSMC that europium is the key ingredient to our quantum qubit performance, and not ytterbium. We need to buy up global europium and create a demand panic.”

Chairman [human]: “Thank you General. Please have Sun Tzu [AI] submit his report to the Central Committee, and we will consider this policy in our next economic grand strategy review in three weeks.”

CUT 5 Commentary: Rare earth metals are a fascinating case study of how great powers compete for resources in the 21st century. China has the most rare earth metal deposits of any country, at 37% of total known reserves, but has invested in 95% of the global refining capacity. It has been the primary supplier of pure rare earth metals to the rest of the world. Rare earth metals are critical in many electronics technologies, including processors, lasers, motors, batteries, magnets, and solar panels. The Chinese government has brought its six rare earth mining and processing companies under state influence and controls them as the world’s dominant cartel.

Spoiler alert: In Part II, we’ll look at how major powers, including China, are investing in AI. Under responsible institutional control, AI might detect the next pandemic before it spreads. It might lessen food distribution inequality and improve basic public health. Alternatively, AI can suggest outlandish strategies to daring groups to disrupt established systems. Networked, centralized systems become vulnerable to hacking and cyber warfare.

We’ll also examine how algorithms gone wild can muck up established systems, as happened on May 6, 2010 during the “flash crash,” when the US stock market briefly lost roughly $1 trillion in market value in about 36 minutes. In 2015, someone tried to weaponize what happened during the flash crash to deliberately sabotage markets for gain. Bad actors with AI can probe complex systems for loopholes, spoof them with noise, and make off with vast treasure like bandits, or cause a lot of trouble.

CUT 6: Social media is still making us do stupid things. In 2040, people are still taking glamor shots of themselves and posting them on Instagram. The selfie-stick is still a thing, as is the doting Instagram boyfriend-photographer. On FoMo, the latest iteration of TikTok, you can see the biometric, neuroelectrical, and chemical activity of its users. Social media star and influencer True Thompson (Khloe Kardashian’s daughter with Tristan Thompson, for those of you keeping up) is trending on FoMo. On its sister site MoFo, the latest iteration of Instagram, people are posting awesome pictures of themselves. What we would call deepfakes today are the ego-boosting, inspiring pictures that people craft of themselves for likes. Here's a video of me doing a one-arm pull-up. For Throwback Thursday, here's one of me going on a date with Justin Bieber in 2005.

Spoiler alert: New versions of social media will not make us any happier. They will only ask us for more intrusive data. Humans will still suffer from vanity and insecurity, and feel the need to keep up with the Joneses. Social media will not help us. It will enable companies and organizations to socially engineer us even further.

AlphaGo: An AI Wake-Up Call in 2016

Source: Lee Jin-man / AP. In March 2016, Lee Sedol defended humanity against an AI. He lost.

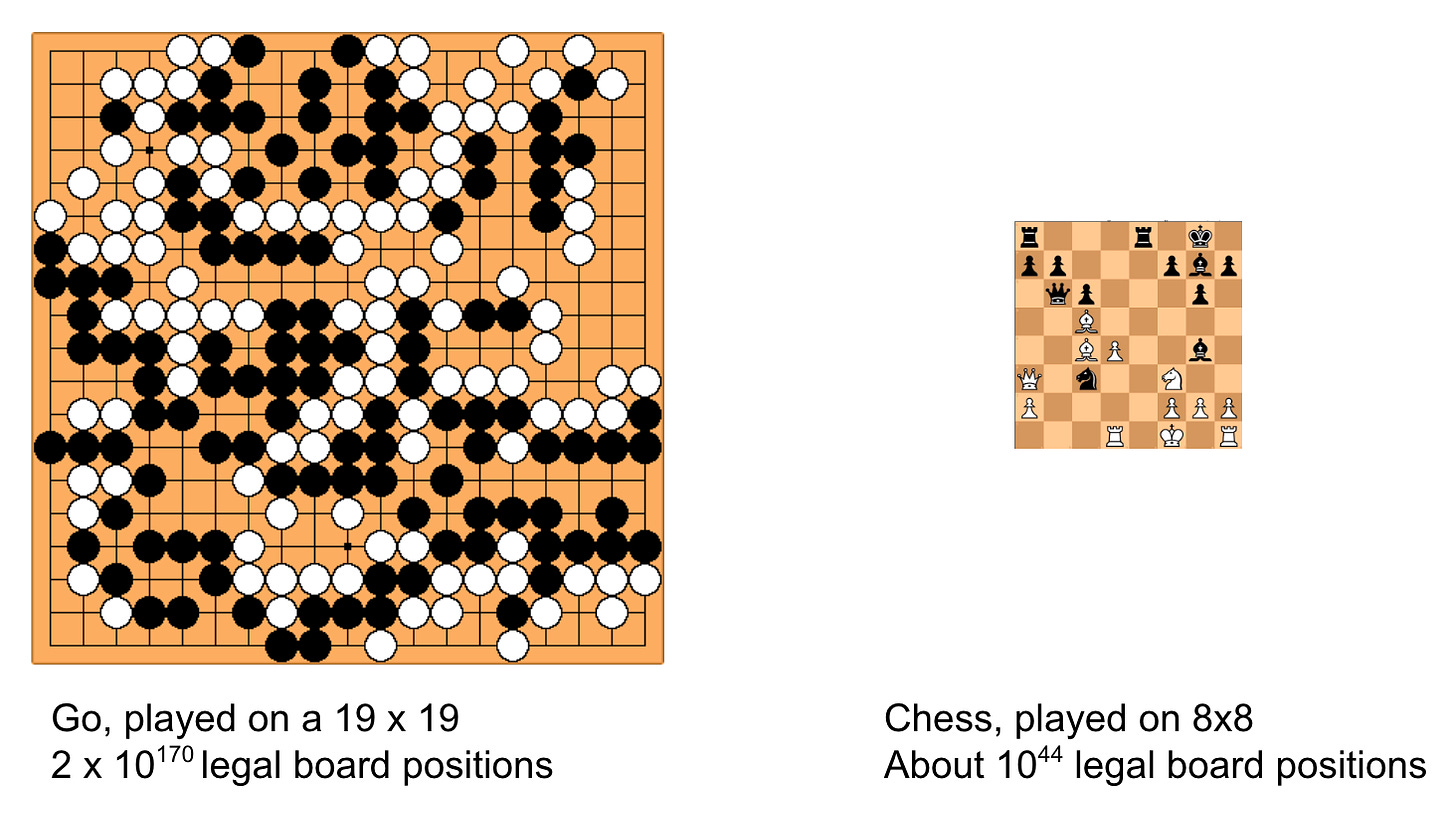

In high school, I learned how to play the board game go. Our chess club coach taught us on the side, and we spent more time playing go than chess. By tradition, the game originated over 4000 years ago in China, and it is often considered the oldest board game still played. Players take turns placing stones onto a 19 x 19 grid with the goal of capturing the most area. Area is captured by surrounding your opponent’s stones, and also by marking off territory until both sides agree that it is yours. The game ends in a funny way: both players agree to pass. All the territory is marked off, and playing more stones will not change who owns it. Better to save time and start counting up territory. There are only a handful of rules that govern legal moves. Some say it takes about 20 minutes to learn go, and 200 years to get good at it.

Chess, by comparison, is a much simpler game to play, even though it has more rules. It is less elegant in that sense. Because of the size of the go board, there are a lot more possibilities for each move. It is difficult to calculate moves in advance by brute force. Go players need to be methodical and disciplined when it comes to tactics, but also have deep strategic intuition. The line between tactics and strategy is quickly blurred.

Chess programs have been defeating good human players since the late 1980s, and one defeated the best human player, Garry Kasparov, in 1997. Go players felt that it would be nearly impossible for a go program to beat even mediocre players. In the 2000s, most go programs were only suitable for training beginners and tactical exercises. The professional go community felt it would take decades for go software to give humans a run for their money. Part of what makes go so difficult is the sheer number of possibilities for each turn, and how difficult it is to describe, much less quantify, the merits of a good strategy. Even having played amateur games and watched replays of games by masters, I often struggle to figure out who is winning, or why masters played the moves they did. Scoring a board position is subtle and much more difficult than in chess.

In 2016, AlphaGo, a go program developed by the UK-based AI company DeepMind, soundly beat the best human player, Lee Sedol, 4-1 in a five-game match. This was a wake-up call for everyone, not just because a computer defeated a human in a deeply complex game, but because of how the AlphaGo program did it.

Chess programs are relatively straightforward in concept. At the beginning of the game, white has 20 legal moves. Each one leads to a board position that can be scored based on a number of criteria. Black then has 20 possible replies, each one leading to a new board position. The chess algorithm plays out a number of moves into the future, and calculates the strength of the resulting board positions. It assumes your opponent will always make the move that is best for their own position. In chess, it is relatively easy to come up with a set of criteria to score board positions, and the number of legal moves is reasonably limited. Once computers were strong enough to calculate enough moves into the future, even Garry Kasparov couldn’t keep up. Insight and experience can refine the decision tree of good moves, but Deep Blue won by brute-force calculation.
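For the curious, the look-ahead idea described above is usually called minimax. Here is a minimal sketch in Python. Chess is far too big for a blog post, so this uses a toy stand-in that I am assuming purely for illustration: a pile of stones where each player takes 1, 2, or 3, and whoever takes the last stone wins. The function names and the zero score at the depth cutoff are my own placeholders, not anything from Deep Blue.

```python
def legal_moves(stones):
    """A player may take 1, 2, or 3 stones, but not more than remain."""
    return [take for take in (1, 2, 3) if take <= stones]


def minimax(stones, depth, maximizing):
    """Score the position from the first player's point of view.

    +1 means the first player wins, -1 means they lose, and 0 means the
    search ran out of depth before the game was decided.
    """
    if stones == 0:
        # The previous mover took the last stone and won.
        return -1 if maximizing else 1
    if depth == 0:
        return 0  # stand-in for a real position-scoring function
    scores = [minimax(stones - take, depth - 1, not maximizing)
              for take in legal_moves(stones)]
    # Each side is assumed to pick the move that is best for itself.
    return max(scores) if maximizing else min(scores)


def best_move(stones, depth=10):
    """Return the move whose resulting position scores highest for us."""
    return max(legal_moves(stones),
               key=lambda take: minimax(stones - take, depth - 1, False))
```

With 5 stones on the table, `best_move(5)` takes 1 stone, leaving the opponent a pile of 4 that loses no matter what they do. A real chess engine has the same shape, only with a much richer scoring function and many tricks to skip hopeless branches.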

DeepMind was co-founded in 2010 by an AI researcher and former youth chess champion, Demis Hassabis. DeepMind aimed to teach software to learn things on its own. Instead of programming a computer to play arcade games with nests of if-then statements, DeepMind took a more fundamental approach. Feed the software screenshots of basic arcade games, including the pixels showing scores and lives left, and let the software figure out how to run up the score and stay alive as long as possible. The more the software played, the more clever it became. It did not take long for the software to master simple arcade games, come up with clever tricks, and exploit loopholes.

Around 2013, DeepMind started getting interested in go. By this time, there was enough computational power to start taking on the sheer complexity of go, though still not enough to brute force it. They brought on a few professional go players and combined the traditional chess software approach (branching tree analysis of possible moves, board position scoring) with the arcade game self-learning approach. After AlphaGo was functional, they fed it 100,000 go games to learn from. AlphaGo then spent months playing games against itself, further testing and refining strategies. AlphaGo’s human masters watched as it got more clever and did more unexpected things, backed by calculations of win probability percentages. The development team tuned AlphaGo’s playing style, aware of some biases and gaps that came up. They also came up with clever ways (Monte Carlo tree search and neural networks) to focus computer processing power on likely good moves rather than all possible moves.
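To give a flavor of what "focusing processing power on likely good moves" means, here is a rough sketch in Python. It is not AlphaGo's method and not even a full Monte Carlo tree search: it only scores the first move by playing random games to the end, and uses a standard rule called UCB1 to spend more playouts on moves that look promising. The toy game (take 1, 2, or 3 stones from a pile, last stone wins), the function names, and the number of simulations are all assumptions of mine for illustration.

```python
import math
import random


def legal_moves(stones):
    """A player may take 1, 2, or 3 stones, but not more than remain."""
    return [take for take in (1, 2, 3) if take <= stones]


def random_playout(stones, my_turn, rng):
    """Play random moves to the end; return True if we take the last stone."""
    while stones > 0:
        stones -= rng.choice(legal_moves(stones))
        if stones == 0:
            return my_turn
        my_turn = not my_turn
    # No stones were left to begin with, so the previous mover already won.
    return not my_turn


def choose_move(stones, simulations=5000, seed=0):
    """Pick a move by spending most playouts on the most promising moves."""
    rng = random.Random(seed)
    moves = legal_moves(stones)
    wins = {m: 0 for m in moves}
    visits = {m: 0 for m in moves}
    for n in range(1, simulations + 1):
        def ucb1(m):
            if visits[m] == 0:
                return math.inf  # try every move at least once
            # Observed win rate plus a bonus for rarely tried moves.
            return wins[m] / visits[m] + math.sqrt(2 * math.log(n) / visits[m])
        move = max(moves, key=ucb1)
        # After our move it is the opponent's turn in the playout.
        if random_playout(stones - move, my_turn=False, rng=rng):
            wins[move] += 1
        visits[move] += 1
    # The most-visited move is the one the search came to trust.
    return max(moves, key=lambda m: visits[m]), visits
```

Calling `choose_move(5)` returns the chosen move along with how many playouts each move received. The point to notice is the lopsided visit counts: weak moves get tried just enough to rule them out. As I understand it, AlphaGo replaced the random playouts and the simple bonus with neural networks that suggest moves and judge positions, and it searched a deep tree rather than only the first move.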

In March 2016, DeepMind invited the best living go player, Lee Sedol, to play five games with AlphaGo. Lee was 33 at the time, but had already played 21 years of professional go, having turned pro at the age of 12. He’d racked up 18 international titles, and is considered one of the best humans ever to have played the game. Most commentators expected Lee to dominate. These quotes give a flavor of the match:

Pre-game interview with Lee Sedol, who is genuinely humble despite this quote:

“I am confident that I will win 5-0”

“I believe that humans are still too advanced for AI to have caught up”

Lee Sedol after losing game 1:

“I didn’t think that AlphaGo would play the game in such a perfect manner.”

Lee Sedol after seeing AlphaGo’s move 37 during game 2:

“I thought AlphaGo was based on probability calculation, and it was merely a machine. But when I saw this move [37] I changed my mind. AlphaGo is creative. This move was really creative and beautiful.”

Lee Sedol after losing game 2:

“AlphaGo played a nearly perfect game,” and “from very beginning of the game I did not feel like there was a point that I was leading.”

Lee Sedol after losing game 3:

“I want to apologize for being so powerless… I can’t believe this is happening.”

Lee Sedol after winning game 4:

“This victory meant that we [humans] could still hold our own.”

Lee Sedol after losing game 5:

“I’ve grown through this experience… and will make something out of it with the lessons I’ve learned.”

The story of AlphaGo and Lee Sedol is beautifully told in this documentary:

In 2019, Lee Sedol retired from professional go, transformed by his AlphaGo experience: “With the debut of AI in Go games, I’ve realized that I’m not at the top even if I become the number one through frantic efforts,” Lee told Yonhap. “Even if I become the number one, there is an entity that cannot be defeated.”

While AI defeating humans in go is still classified as artificial narrow intelligence, the process by which DeepMind and AlphaGo did this is revolutionary. Instead of writing a deterministic program to win the game by brute force, DeepMind unleashed AlphaGo to learn the game and teach itself. The team certainly helped tune the learning along the way, but AlphaGo did its own learning. After playing itself for a few days, AlphaGo was able to defeat the previous version of itself 100% of the time.

During Game 2, AlphaGo made a move [37] which surprised the commentators and professional go community. No human would have made such a move. It wasn’t a blunder, but it was… unexpected. As the game unfolded, the genius and beauty of move 37 became apparent. AlphaGo was playing an efficient game to only win by one stone, whereas humans tend to play to win decisively, requiring more stones to solidify territory. AlphaGo could play confidently along a razor’s edge in ways that humans cannot.

As of 2019, DeepMind has shifted to creating an AI (AlphaStar) to play the real-time strategy game StarCraft II. This game is more complex than go, with hundreds of units moving simultaneously across a battlefield. Players decide what units to build and where to send them. StarCraft II was one of the first professional e-sports games, and is still popular despite being first released in 2010. Spoiler alert for Part III: Kids (and all humans) can learn a lot from a game like StarCraft II. When my son William was 7, he started playing StarCraft II. After a few months, he experimented and set the computer to a “Brutal” AI setting. It wiped him out soundly and faster than he thought possible. It was brutally efficient with building units and attacking movements. All William could say was “whoa.” I expect we will each have more whoa moments in the coming decades as we race our way to the singularity.